Your days of peeking at someone else’s text conversations on the train might be numbered. At the Neural Information Processing Systems conference in Long Beach, California, next week, Google researchers Hee Jung Ryu and Florian Schroff will present a project they’re calling an electronic screen protector, in which a Google Pixel phone uses its front-facing camera and eye-detecting artificial intelligence to determine whether more than one person is looking at the screen.

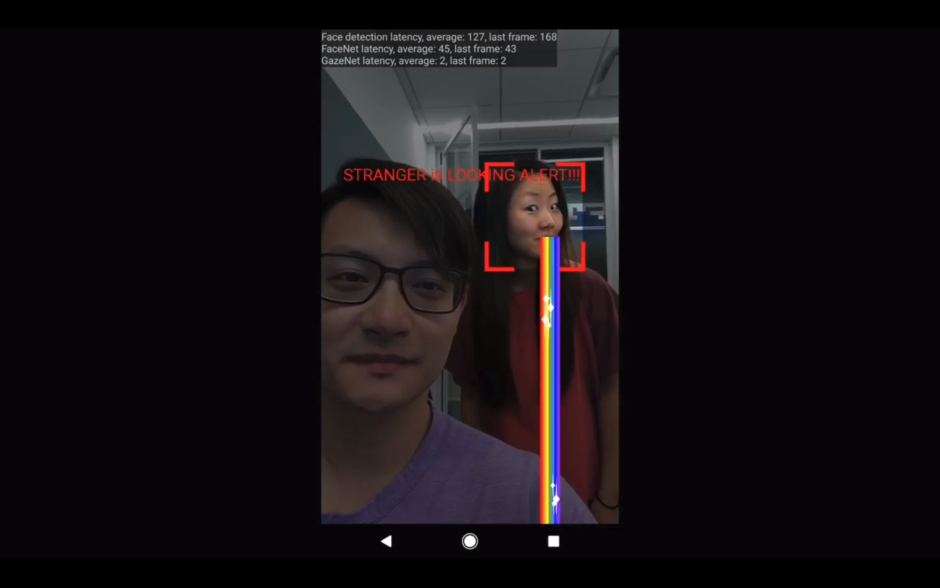

An unlisted but public video by Ryu shows the software interrupting a Google messaging app to display a camera view, with the peeking perpetrator identified and given a Snapchat-esque vomit rainbow.

Ryu and Schroff claim the system works across different lighting conditions and poses, and can recognize a person’s gaze in 2 milliseconds. Ostensibly, the software can work this quickly because it runs on the phone itself, rather than sending data to the company’s powerful cloud servers for processing.

Google has recently made a large push to make it easier for developers to integrate artificial intelligence into Android phones and other mobile devices, releasing a tool called TensorFlow Lite. The AI software library requires much less storage and computing power than TensorFlow, Google’s standard AI toolkit, released in late 2015. Just today, the company released a new API to make it easier for developers to use TensorFlow Lite in their own mobile apps.

This isn’t Google’s first foray into gaze detection, either. The company holds a 2003 patent for tracking a computer mouse pointer with a person’s vision, as well as Pay-Per-Gaze ad tracking.

The new gaze-detection software has big privacy implications. The researchers note that it could be turned on when a user is reading sensitive information or watching video in a public place.

That might come in handy for people who frequently use their phone in crowded places, such as on subway trains.