YouTube has announced tougher guidelines on hateful content in videos, strengthening its response in three categories. Following a backlash over brand advertising appearing on controversial content, the social media platform is moving to clean up which videos are part of its ad network.

It first promised to take action in March, pledging to ensure that hateful content could not be monetized. The move came in response to an ad boycott after major brands found their ads embedded in, or appearing alongside, offensive videos.

The Google-owned platform has updated the guidelines that govern which YouTube videos can run ads, aiming to prevent a repeat of past mismatches and to reassure both its community of video makers and its advertisers. High-profile YouTubers were up in arms last year after the website, following complaints from brands, determined that some content at the more controversial end of the scale was not suitable for advertising. The issue spilled over into 2017, when Disney and others cut ties with prolific YouTube star PewDiePie over anti-Semitic content in some of his videos.

The strengthened guidelines appear in YouTube's Creator Academy. YouTube product management VP Ariel Bardin told The Verge that the company is now taking a tougher stance on:

Hateful content: Content that promotes discrimination or disparages or humiliates an individual or group of people on the basis of the individual’s or group’s race, ethnicity, or ethnic origin, nationality, religion, disability, age, veteran status, sexual orientation, gender identity, or other characteristic associated with systematic discrimination or marginalization.

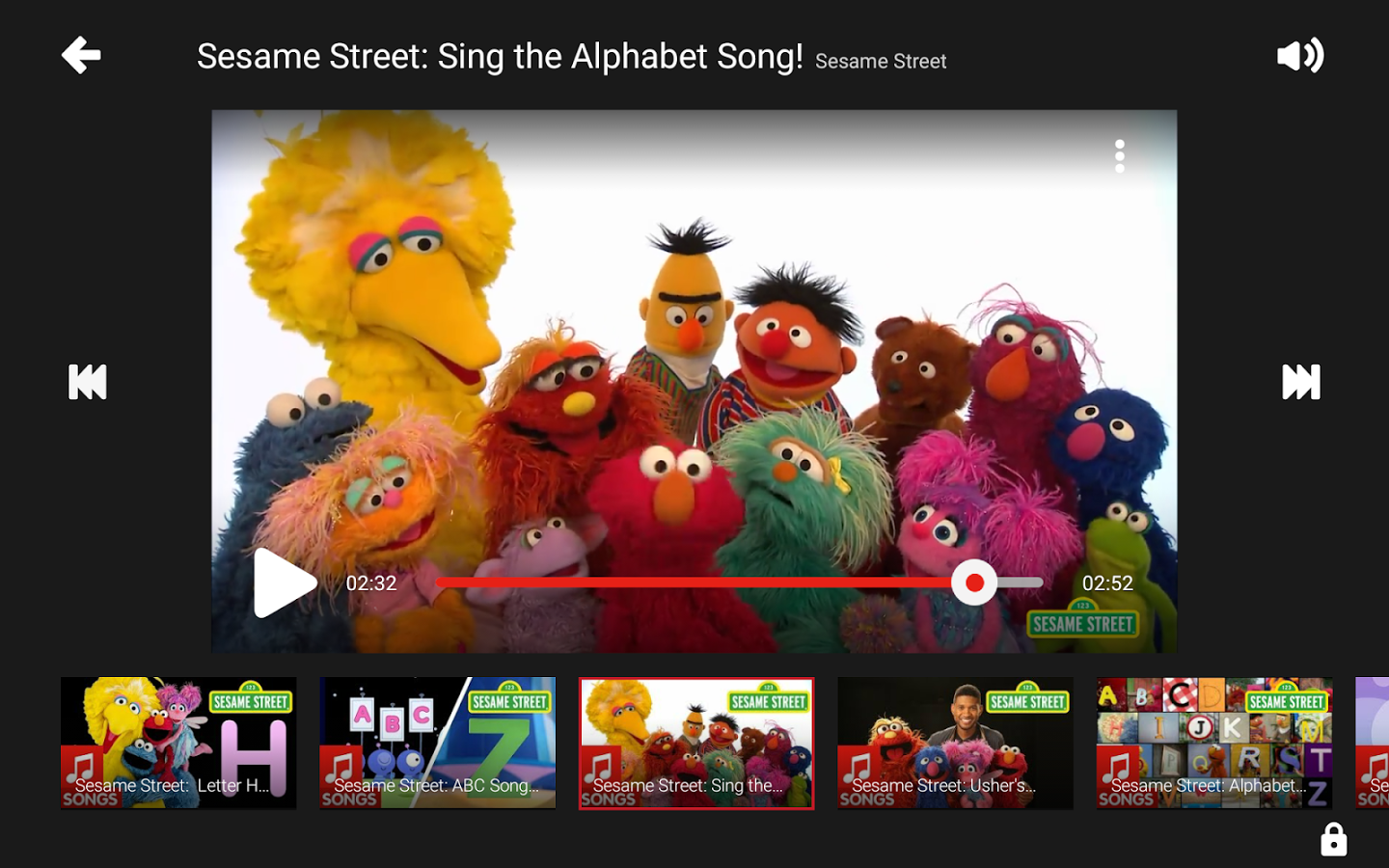

Inappropriate use of family entertainment characters: Content that depicts family entertainment characters engaged in violent, sexual, vile, or otherwise inappropriate behavior, even if done for comedic or satirical purposes.

Incendiary and demeaning content: Content that is gratuitously incendiary, inflammatory, or demeaning. For example, video content that uses gratuitously disrespectful language that shames or insults an individual or group.